We are very happy and proud to share a quote from the IT PRO Portal interview with Artem Koren, Sembly CPO: “AI detection tools risk losing the generative AI arms race.”

As the popularity and reach of generative AI grows, many are starting to sound alarm bells over the potential for misuse and exploitation. Models like ChatGPT can already generate text that can be passed off as human-generated, and AI-generated media continues to develop rapidly.

If threat actors use the chatbots of tomorrow for phishing emails, to write malware, or for misinformation (and there’s every indication they will), public and private entities must be equipped with tools to flag AI-generated content. The same applies to detecting plagiarized content made with chatbots, a rapidly emerging concern among academics.

In January, OpenAI released its classifier, a tool intended to pick out text that was generated by large language models (LLMs), whether GPT-4 or a similar programme. The problem? It’s highly inaccurate. In tests, OpenAI’s classifier correctly labelled only 26% of AI-written text as “likely AI-written”, while it incorrectly identified human-written text as machine-generated 9% of the time. Strings under 1,000 characters are especially tricky to unpick, and the classifier is far less accurate on languages other than English.
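To see what those two figures mean in practice, the short Python sketch below applies Bayes' rule to the 26% detection rate and 9% false-positive rate quoted above. The share of AI-written text in the pool being checked is not given in the article, so the values used here are purely illustrative assumptions, as is the function name `flag_precision`.

```python
def flag_precision(true_positive_rate: float,
                   false_positive_rate: float,
                   ai_share: float) -> float:
    """Probability that a text flagged as AI-written really is AI-written.

    ai_share is the assumed fraction of AI-written texts in the pool
    being checked (an illustrative assumption, not a figure from the article).
    """
    flagged_ai = true_positive_rate * ai_share               # AI texts correctly flagged
    flagged_human = false_positive_rate * (1.0 - ai_share)   # human texts wrongly flagged
    return flagged_ai / (flagged_ai + flagged_human)


# Rates quoted for OpenAI's classifier in the article.
TPR = 0.26  # share of AI-written text labelled "likely AI-written"
FPR = 0.09  # share of human-written text wrongly labelled as AI-written

# Hypothetical shares of AI-written text in the pool under review.
for ai_share in (0.05, 0.20, 0.50):
    precision = flag_precision(TPR, FPR, ai_share)
    print(f"AI share {ai_share:.0%}: flag is correct {precision:.1%} of the time")
```

Under these assumed shares, a flag is right roughly 13%, 42%, and 74% of the time respectively. The rarer AI-written text is in a given pool, the less a “likely AI-written” label can be trusted on its own.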

It’s clear OpenAI believes there’s scope for these tools in academia, with the company noting it’s “an important point of discussion among educators” in a blog post. But businesses could also gain a great deal from tools of this kind.

What are the dangers of undetected AI content?

One immediate concern is identifying the sources generative AI tools use. Many popular systems are built on models trained on information scraped from the internet. In a black box development scenario with little insight into the training data, it can be difficult to reassure companies that their intellectual property (IP) hasn’t been used to ‘create’ something else.

There are already concerns that AI threatens the livelihoods of artists, and if systems such as OpenAI’s deep learning model DALL·E 2 or the CompVis Group’s Stable Diffusion draw on licensed works for their output, then firms from all sectors might find it in their interests to establish a method for the granular analysis of AI content.

At PrivSec London, a panel of AI experts urged firms to adopt greater transparency over how their models work to avoid regulatory difficulties down the line. Panel host Tharishni Arumugam, global privacy technology and operations director at Aon, noted that if firms aren’t already doing so, now’s the time to make sure third-party contracts don’t include clauses allowing those third parties to train AI models using the firm’s data.

OpenAI addressed this concern with its recently announced ChatGPT API, which doesn’t use the data it processes for training purposes unless a company opts in.

Generative AI’s capacity to produce convincing falsehoods is problematic beyond its potential role in generating pro-Russian propaganda, misinformation, and lies that could damage a company’s reputation.

Chatbots like ChatGPT are a source of worry due to their extremely wide user base, the ease with which they can be accessed, and the large volume of content they can generate at once.

The impending generative AI vulnerability nightmare

These factors could result in a large and well-meaning public using generative AI with damaging consequences. For example, code generated with large language models such as OpenAI’s Codex may contain logic errors that could cause damage to a business’ IT stack down the line.

Stanford University researchers, for example, found developers using AI assistants introduced more security vulnerabilities into their code compared to those who wrote code from scratch. Those in the study who used AI assistants also disproportionately rated their code as more secure, indicating misplaced trust in the ability of such AI tools.

Even when errors in code don’t open up software to zero-day exploits, they can lead to disruptions to normal operations, as seen in Windows Defender’s false reporting over ransomware.

The widespread use of AI assistants could also open companies up to legal challenges, given the potential for models to have been unlawfully trained on licensed content. Challenges are already underway, with GitHub Copilot being sued for “software piracy on an unprecedented scale,” in a charge that also contests the legality of using OpenAI’s Codex model to generate code.

Because AI-generated code is so easy to obtain, it can be shared widely on forums and quickly overwhelm moderators, who have little way of reliably identifying whether code was written by humans or machines. This has led the popular coding forum Stack Overflow to ban ChatGPT responses entirely.

With this in mind, the window for developing tools to detect machine-generated code is fast closing. If models become sophisticated faster than countermeasures can be designed, businesses could find they have few mechanisms to identify potentially troublesome sources of code, such as AI models.

Read the full article at IT PRO Portal.

- Multi-meeting chats

- AI Insights

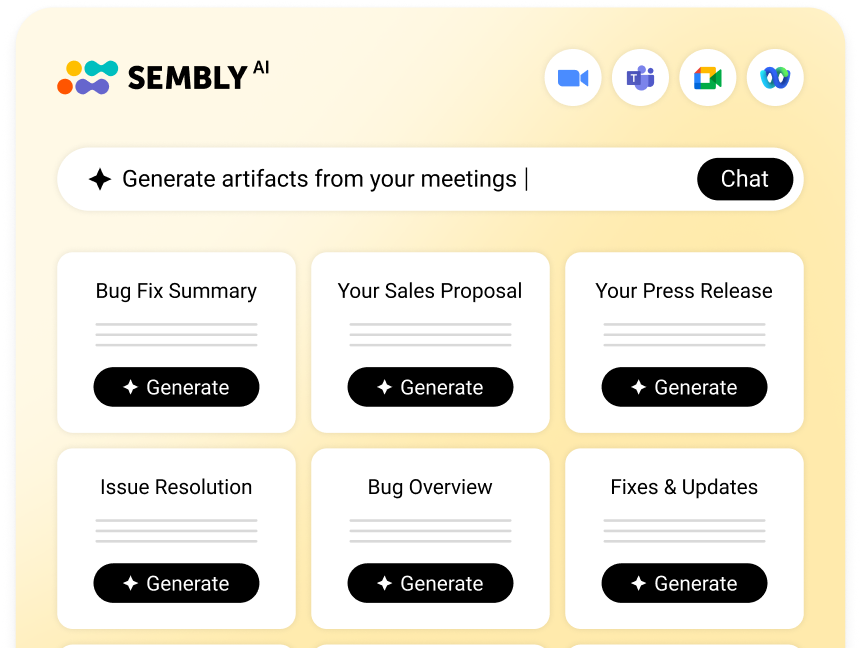

- AI Artifacts